A New Mexico jury found Meta liable for failing to effectively protect children from sexual exploitation on its platforms (Facebook, Instagram, and WhatsApp; Threads was not mentioned because it only launched in July 2023). The jury took just one day to agree that Meta should pay $375 million in civil damages for violating state consumer protections and misleading consumers (primarily parents) about the safety of its platforms (and associated apps/sites). Meta was accused of putting profits over the safety of children.

Back in April 2023, The Guardian published the findings of its two-year investigation into how the company struggled to prevent predators from using its platforms for child sex trafficking. A warning: that article is graphic, but it should be read to understand how the platforms were used for both grooming and trafficking. It also covers Meta’s claim that it is doing all that it takes, including working with law enforcement, a claim refuted by victims and by advocates who point to accounts with explicit material that they reported directly and that Meta did nothing about. The investigation also puts this case, and the victory for the state and for advocates fighting child sex trafficking, into better context. Meta unsurprisingly disagrees with the verdict and penalty and plans to appeal.

While this isn’t solely a Meta problem (it is social media wide), the greatest percentage of the trafficking has been on Facebook and Instagram (#1 and #2 respectively; Threads, again, did not launch until July 2023, but it would probably rank in the top 5 now). At least five prosecutors interviewed back then were angered by what they felt were Meta’s unnecessary delays in complying with the judge-signed warrants and subpoenas needed to gather evidence in sex trafficking cases (the company would cite technicalities with format and wording). Those delays, they said, prevented timely investigations and slowed the rescue of victims. Meta denied these allegations.

Professor Hany Farid (University of California, Berkeley), one of the people who created PhotoDNA, the technology Meta uses to identify certain harmful visual content, said at the time that Meta could in fact do more to identify trafficking. In his view it wasn’t a technological issue but one of corporate priorities, aka profits. We see this in how heavily machine learning/AI has been used in this process in recent years: the company struggles with false positives while not having enough actual people to review and validate the data. In plain language, Meta tries to automate a lot of these processes while also relying on contractors for various moderation/identification tasks (the investigative article also covers this; more on this below).

A former Facebook data scientist turned whistleblower leaked thousands of pages of documents that confirmed how the company handled the harmful content these moderators identified, and claimed that flagged items in closed Facebook groups and/or Facebook Messenger (to which the company has added end-to-end encryption) were much harder to get escalated. One of the memos highlighted company guidelines stating that “Messenger groups with less than 32 people should be treated with a full expectation of privacy”. This of course highlights the challenges with platform and messaging privacy (in unencrypted areas, companies usually warn users about conduct regarding illegal activity).

Myself, I’m very much a privacy advocate when it comes to one’s personal data. It’s why I don’t trust Google or Meta to begin with: if they have a way of harvesting that data, they will try to do it in some roundabout fashion. There is even a whistleblower lawsuit alleging that Meta employees are able to access WhatsApp messages despite its end-to-end encryption. These allegations, made by a contractor, have not been proven, and Meta denies them, citing that WhatsApp has had end-to-end encryption using the Signal protocol for over a decade.

The investigative piece also covers Section 230, which shields internet companies from being held liable for the content they host. It is a piece of legislation that made sense in the earlier days but is most definitely in need of some reform, since the current law gives these companies no incentive to do anything beyond the bare minimum while expending as little money and resources as possible.

After this investigative report was published by The Guardian, New Mexico’s attorney general, Raúl Torrez, conducted an undercover operation (Operation MetaPhile) in which officers posed as children on Facebook, Instagram, and WhatsApp (again, Threads was not mentioned because it had not launched yet when this sting operation took place). The jury heard that these fake profiles were inundated with images and targeted solicitations from child abusers. Three men were eventually arrested during the sting for attempting to use Meta’s social media platforms to prey on children.

During the trial, Mark Zuckerberg and Instagram chief Adam Mosseri testified that “harms to children, such as sexual exploitation and detriments to mental health, were inevitable on the company’s platforms due to their vast user bases”. This is a cop-out IMHO: the algorithms on Facebook, Instagram, and Threads are really good at homing in on your engagement, and that same capability could be used internally to highlight illicit activity for review IF the priority was actually there. Internal messages and documents, along with testimony from child safety experts (within and outside the company), revealed that Meta repeatedly ignored warnings and failed to fix its platforms to protect kids. Additionally, law enforcement and the National Center for Missing and Exploited Children (NCMEC) testified that Meta’s reporting of these crimes against children (including child sexual abuse material, or CSAM) was “deficient”.

The trial also highlighted how Meta’s overreliance on AI to moderate its platforms generated high volumes of junk reports, which made its reporting useless for law enforcement (frustrated officials testified that it “meant crimes could not be investigated”). To be fair (IMHO), IF AI models reach a state where they can successfully identify harmful material beforehand, sparing human moderators from being the first line that has to weed through a lot of that psychologically scarring material, then that would be a good thing. The technology isn’t anywhere near that level, though, given the priorities of these companies in training their LLMs.

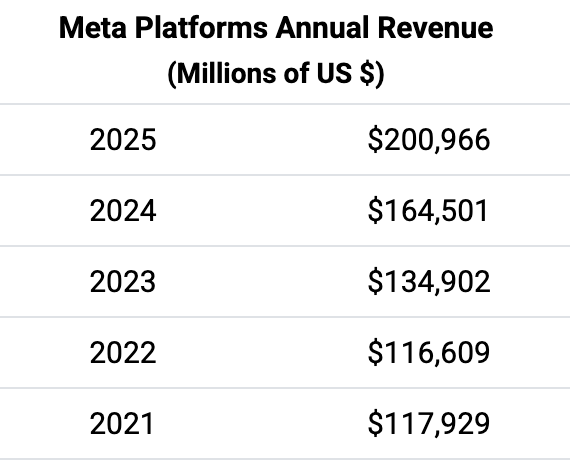

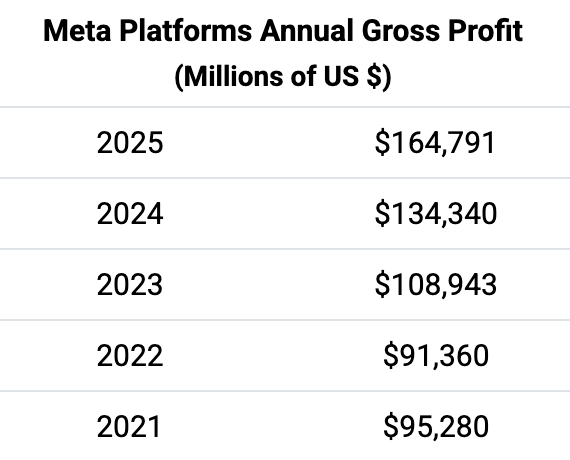

The jury ordered Meta to pay the maximum penalty under the law of $5,000 per violation (resulting in the $375 million in civil penalties for violating New Mexico’s consumer protection laws). While this may look like a huge amount, it is still a tiny part of what Meta made in revenues/profits since 2021.

Just using the timeframe of The Guardian’s investigation (2021-2023), Meta’s annual revenues for those three years totaled $369.4 billion, while its gross profit totaled $295.6 billion. This civil penalty is a rounding error for Meta in the greater scheme of things. These corporations know this, which is why lawsuits that impose a monetary penalty (oftentimes with maximum limits) aren’t much of a deterrent (this is especially true when it comes to data breaches and privacy violations).
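To put those numbers side by side, here is a quick back-of-the-envelope sketch. It only uses the figures quoted in this post (nothing is independently sourced), and the implied violation count assumes every violation was assessed at the $5,000 maximum. The function and constant names are my own, purely for illustration.

```python
# Back-of-the-envelope arithmetic using only figures quoted in this post.
PENALTY_USD = 375_000_000            # civil penalty ordered by the jury
MAX_PER_VIOLATION_USD = 5_000        # statutory maximum per violation
REVENUE_2021_2023_USD = 369.4e9      # total revenue, 2021-2023 (from the post)
GROSS_PROFIT_2021_2023_USD = 295.6e9 # total gross profit, 2021-2023 (from the post)


def implied_violations(penalty: int, per_violation: int) -> int:
    """Number of violations implied if each one drew the same fixed penalty."""
    return penalty // per_violation


def share_percent(part: float, whole: float) -> float:
    """Express `part` as a percentage of `whole`."""
    return part / whole * 100


violations = implied_violations(PENALTY_USD, MAX_PER_VIOLATION_USD)
pct_of_revenue = share_percent(PENALTY_USD, REVENUE_2021_2023_USD)
pct_of_gross_profit = share_percent(PENALTY_USD, GROSS_PROFIT_2021_2023_USD)

print(f"Implied violations at the maximum rate: {violations:,}")
print(f"Penalty as a share of 2021-2023 revenue: {pct_of_revenue:.2f}%")
print(f"Penalty as a share of 2021-2023 gross profit: {pct_of_gross_profit:.2f}%")
```

By this rough math the penalty works out to about 75,000 violations at the maximum rate, roughly 0.10% of three years of revenue and 0.13% of three years of gross profit.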

What matters more to a company like Meta is that it knows it is exposed to other, similar lawsuits (and this loss provides ammunition for those cases). In a separate “media addiction” trial (filed against Google/YouTube and Meta in Los Angeles), both companies were found liable but were fined a laughable $3 million (both companies plan to appeal).

May 4th marks the next phase of this trial, in which Meta could face additional financial penalties and be forced to make changes to its apps. Unfortunately, one of the changes that will likely be ordered is some type of age verification (which is a quagmire because of how such systems have been deployed to date across other sites). Myself, I’ve long been an advocate for better parenting and making use of the parental control tools available in many home routers and operating systems (desktop and mobile). Meta also intends to remove the option for end-to-end encryption for DMs, at least on Instagram, beginning May 8th. One of the remedies that might be suggested in this next phase is disallowing children from sending encrypted messages at all, which makes the timing of this change interesting.

Zuckerberg is part of the problem because his motivation is profit, which matters more to him than the users of the company’s platforms. He has majority control of Meta, which makes it difficult to hold him accountable (many key decisions go through him). Some of this greed and these moral failures are a reflection of him as CEO (along with a C-suite that thinks like him). Sadly, most users are fine with continuing to use Facebook, Instagram, Threads, and WhatsApp because a lot of these issues don’t affect them directly (these companies know they have a captive audience). Myself, I just could not in good conscience continue being invested in the company AND also maintain a presence on any of its properties.